Augmenting LLMs with Computational Tools and the Future of Technical Work

Can I trust large language models yet?

This is a short follow-up to my previous article, Some Thoughts on Consciousness, AI, and ChatGPT. Just today, Stephen Wolfram posted the article ChatGPT Gets Its “Wolfram Superpowers”! on his blog, and to put it lightly, I am impressed.

For context, OpenAI just announced ChatGPT plugins, described as:

...tools designed specifically for language models with safety as a core principle, and help ChatGPT access up-to-date information, run computations, or use third-party services.

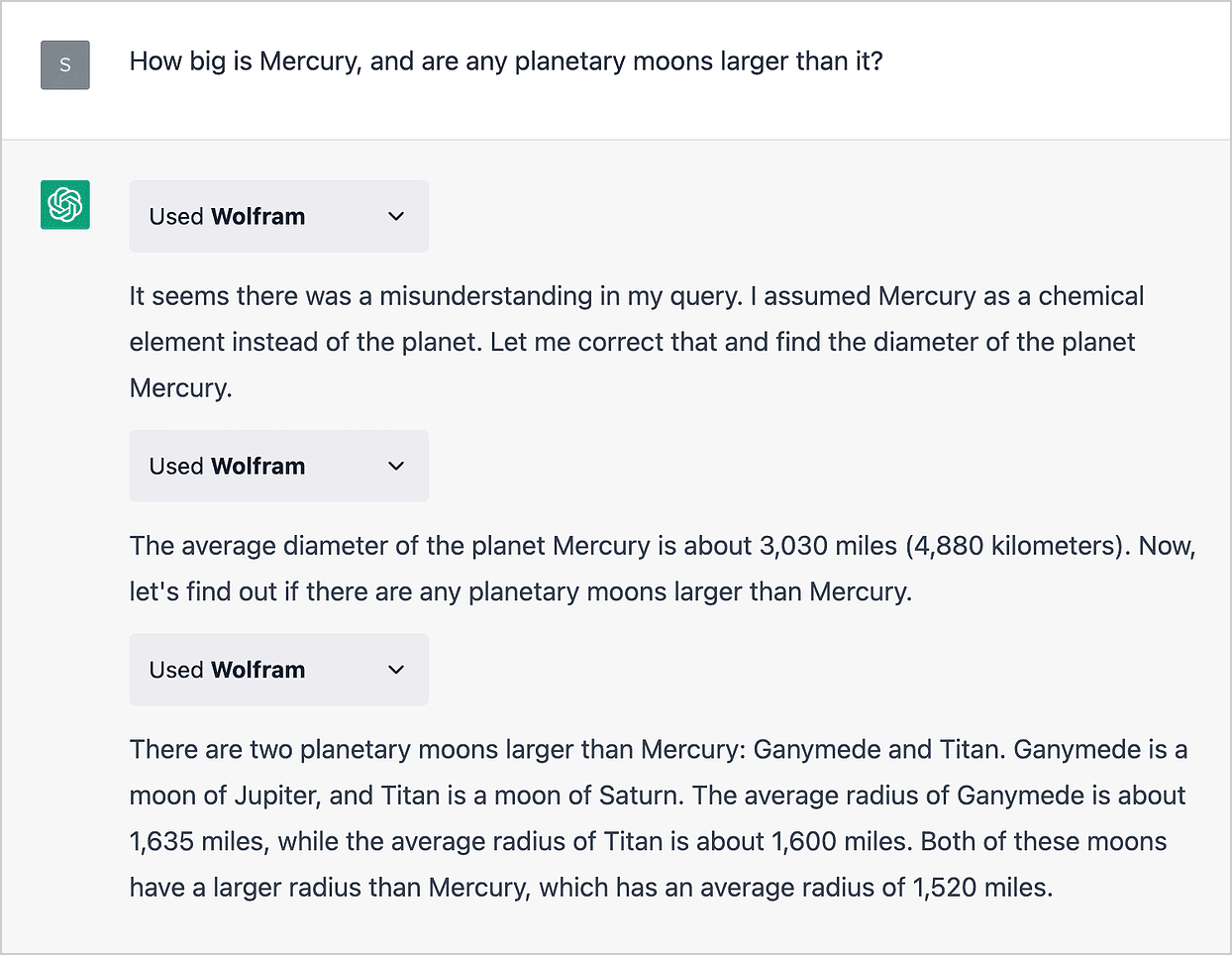

One of the initial plugins on offer is the Wolfram plugin, which connects ChatGPT to Wolfram Alpha and Wolfram Language. Wolfram's article explains how the plugin works and how it lets ChatGPT use these tools to run computations and query data.

I have been interested in AI and how it could augment the productivity of workers. I believe a great future exists where people are able to leverage the capabilities of AI in order to be more productive workers, rather than being replaced by AI outright. I come from an engineering background, and up until now, I have not been able to see how this could work in my field (with the current capabilities of AI). However, with the recent announcement of ChatGPT plugins and the showcase of the Wolfram plugin, I am beginning to see how this could work for someone like me.

In my previous article, I mentioned that a big danger of current LLMs is that they cannot be trusted to provide accurate information. They are prone to hallucination: an LLM producing confident output that has no basis in reality or in its training data.

These models are not agents, they do not know how to act. More importantly, they cannot understand the difference between acting poorly and acting well...

However, ChatGPT (and LLMs in general) can use computational tools such as Wolfram Alpha on our behalf to provide useful and accurate outputs. If I ask a math-related question, I do not need to worry as much about the veracity of the response, because I can check that the model correctly interpreted the result returned by Wolfram Alpha.

Of course, LLMs are still prone to misinterpreting a user's input or the response from a computational tool, but users can verify for themselves how accurate the answer is. A user can see both the query sent to the computational tool and the raw result that is fed back to the LLM. This provides an easy way of verifying an output. Without an external tool, how else would a response from an LLM be verified? By checking the individual weights of the model?
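To make that idea a bit more concrete, here is a minimal Python sketch of what checking a tool-backed answer could look like. It is not the Wolfram plugin or any real plugin API: `ToolCall`, `evaluate`, and `answer_matches_tool` are hypothetical names I made up for illustration, and a tiny exact-arithmetic evaluator stands in for the external tool. The only assumption carried over from the plugin is that the query and the raw result are visible to the user.

```python
import ast
import operator
from dataclasses import dataclass
from fractions import Fraction


@dataclass(frozen=True)
class ToolCall:
    """Hypothetical log entry for one tool call made by the model."""
    query: str       # expression the model sent to the tool
    raw_result: str  # unmodified text the tool sent back


# Only basic arithmetic is supported by this stand-in tool.
_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}


def evaluate(expr: str) -> Fraction:
    """Stand-in for an external computational tool (exact arithmetic only)."""
    def walk(node: ast.AST) -> Fraction:
        if isinstance(node, ast.Expression):
            return walk(node.body)
        if isinstance(node, ast.Constant) and type(node.value) is int:
            return Fraction(node.value)
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](walk(node.left), walk(node.right))
        if isinstance(node, ast.UnaryOp) and isinstance(node.op, ast.USub):
            return -walk(node.operand)
        raise ValueError(f"unsupported expression: {expr!r}")

    return walk(ast.parse(expr, mode="eval"))


def answer_matches_tool(call: ToolCall, claimed_answer: str) -> bool:
    """Re-run the logged query, then compare it with the logged raw result
    and with the number the model reported in its prose answer."""
    recomputed = evaluate(call.query)
    return (Fraction(call.raw_result) == recomputed
            and Fraction(claimed_answer) == recomputed)


call = ToolCall(query="123 * 456", raw_result="56088")
print(answer_matches_tool(call, "56088"))  # True: the answer agrees with the tool
print(answer_matches_tool(call, "56098"))  # False: the model misreported the result
```

The check never looks inside the model. It only needs the logged query and the raw result, which is exactly what makes a tool-backed answer easier to audit than a bare one.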

I am excited to see the further developments of AI using proper computational tools to get results that are both trustworthy and verifiable, especially to help augment my own work in my field. I will probably return to the concept of a future where AIs are primarily used as augmenting tools rather than outright replacements of workers in a future article, as that potential future is starting to become a little bit more real.

I see what’s happening now as a historic moment. For well over half a century the statistical and symbolic approaches to what we might call “AI” evolved largely separately. But now, in ChatGPT + Wolfram they’re being brought together. And while we’re still just at the beginning with this, I think we can reasonably expect tremendous power in the combination—and in a sense a new paradigm for “AI-like computation”, made possible by the arrival of ChatGPT, and now by its combination with Wolfram|Alpha and Wolfram Language in ChatGPT + Wolfram.

In other news, an interesting paper just came out: Sparks of Artificial General Intelligence: Early experiments with GPT-4. Its conclusion seems to be that OpenAI's new GPT-4 model is an early version of an AGI, because of its ability to complete a wide variety of tasks. In my previous article I briefly discussed the concept of AGI. Specifically, I said I do not believe that simply increasing the scale of the compute and/or training data of LLMs would lead to an AGI, but these researchers hold the opposite opinion. Discussions of this paper have their skeptics too, so I'm definitely not the only one who is unconvinced. I still stand by my belief, though of course I am happy to have it changed. That said, this debate really depends on how one defines an artificial general intelligence and what its capabilities should be.

Thanks for reading, until next time.